[RETIRED] HPC System Utilization

User projects

From time to time, RRZE (now NHR@FAU) asks its HPC customers to provide a short report on the work they are doing on the HPC systems. An extensive list of ongoing and past user projects can be found on our User Project Page.

HPC users and usage

RRZE operates large HPC systems (LiMa, shut down at the end of 2018; Emmy, shut down in 09/2022; Meggie; Fritz and Alex, in operation since 2022), a throughput cluster (Woody), and specialized systems (TinyFAT, TinyGPU, and Windows HPC, the latter shut down in June 2018). As of Q4/2022, there are in total more than 2,000 nodes with more than 90,000 cores and almost 400 TB of (distributed) main memory, as well as more than 10 PB of disk storage in five storage systems plus a transparently managed tape library for offline storage.

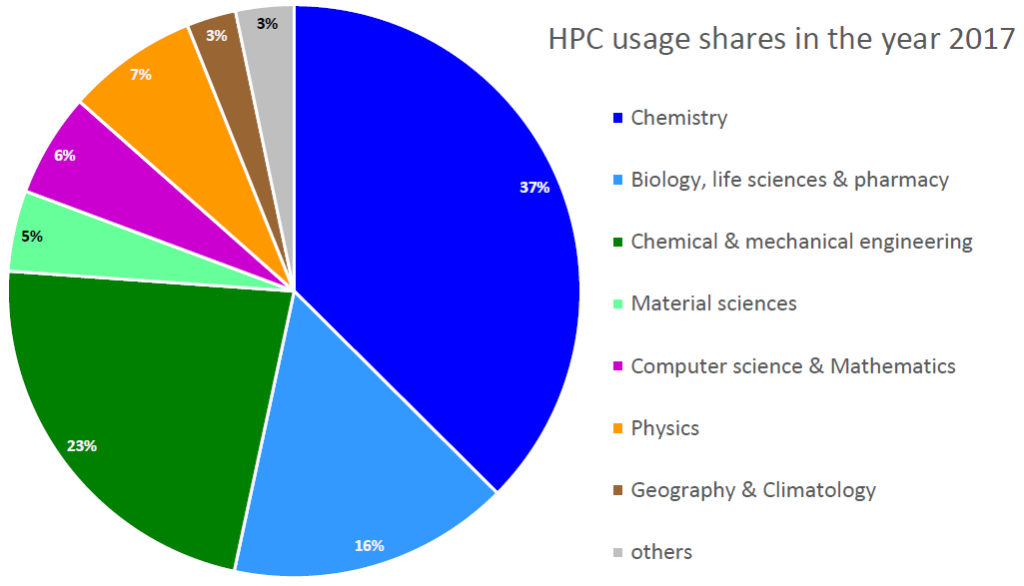

Highlights of 2017

In 2017, more than 550 accounts from almost 70 groups were active (i.e., consumed CPU cycles) on RRZE’s HPC systems. These include scientists from all five faculties of the University, students doing labs as part of their studies or working on their bachelor’s or master’s theses, as well as a few users from regional universities and colleges or external collaborators. In total, about 200 million core hours were delivered to 1.7 million jobs.

Highlights of 2018

The year 2018 was marked by a significant extension of storage capacity through a “shareholder NFS server”. TinyGPU was extended by several user groups to include up-to-date (consumer) GPUs. However, we also had to shut down two systems (LiMa and Windows HPC), both of which had been up and running for more than eight years. Over the years, LiMa served more than one thousand users and delivered almost 300 million core hours to 2.6 million jobs.

In 2018, almost 600 accounts from more than 70 groups were active (i.e., consumed CPU cycles) on RRZE’s HPC systems. These include scientists from all five faculties of the University, students doing labs as part of their studies or working on their bachelor’s or master’s theses, as well as a few users from regional universities and colleges or external collaborators. In total, more than 200 million core hours were delivered to almost 1.6 million jobs.

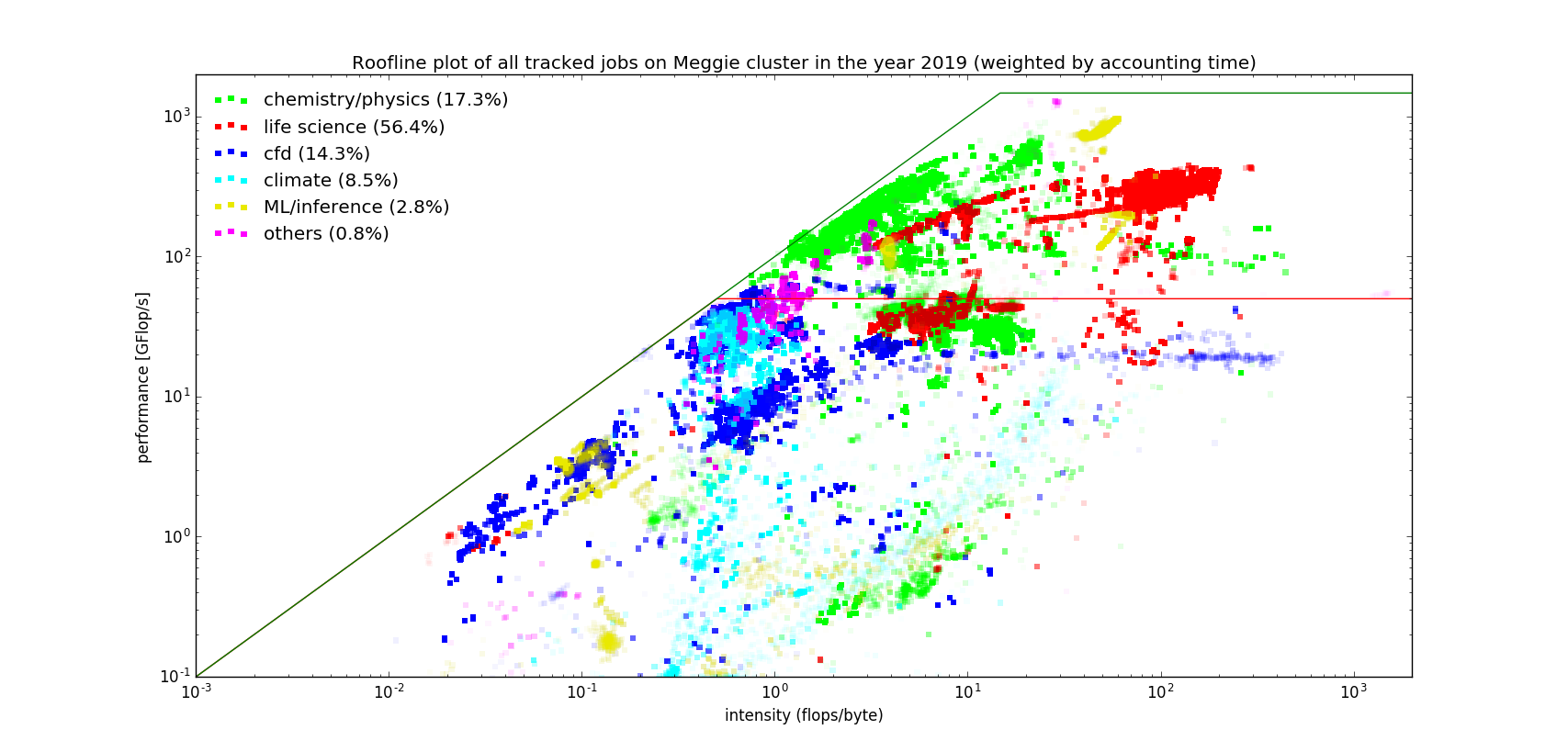

Highlights of 2019

In 2019, about 530 accounts from 70 groups were active (i.e., consumed CPU cycles) on RRZE’s HPC systems. These again include scientists from all five faculties of the University, students doing labs as part of their studies or working on their bachelor’s or master’s theses, as well as a few users from regional universities and colleges or external collaborators. In total, about 215 million core hours were delivered to more than 1.2 million jobs.

Only “minor” hardware extensions took place in 2019: 12 additional nodes with 48 NVIDIA RTX 2080 Ti GPUs for TinyGPU (+51%; all financed by individual user groups), yet another “shareholder NFS server” (+450 TB), and finally 112 additional nodes for the Woody throughput cluster (+64%), financed by the expiring Cluster of Excellence EAM and an upcoming CRC. The proposal for FAU’s next big parallel cluster (4.5 million EUR) is under review by the DFG, and we hope to get approval in early 2020.

Highlights of 2020

In 2020, our DFG proposal was approved and our NHR application was successful. The tender for a major procurement (8 million EUR for a parallel computer and a GPGPU cluster) started in autumn. Several groups again financed significant extensions of TinyGPU and TinyFAT. These new nodes also mark the transition from Ubuntu 18.04 to Ubuntu 20.04 and from Torque/Maui to Slurm as the batch system. Moreover, the HPC storage system for $HOME and $VAULT was replaced during summer 2020, significantly increasing the online disk capacity to several petabytes.

The number of active accounts and groups increased further to 738 and 83, respectively. These again include scientists from all five faculties of the University, students doing labs as part of their studies or working on their bachelor’s or master’s theses, as well as a few users from regional universities and colleges or external collaborators. In total, about 213 million core hours were delivered to more than 1.6 million jobs.

Highlights of 2021

Our work in 2021 was dominated by the transition of the HPC services from RRZE to NHR@FAU. The newly established Erlangen National Center for High Performance Computing (NHR@FAU) is now in charge not only of the national services but also of all basic Tier3 HPC services of FAU. Dedicated financial resources are available for the basic Tier3 services; however, we exploit as many synergies as possible in purchasing and operating the hardware as well as in user services. Thus, from the outside, NHR@FAU looks like one unit despite its different funding sources and duties.

Besides this smooth transition in the background, the procurement of the new NHR/Tier3 systems Alex (GPGPU cluster) and Fritz (parallel computer) was a year-long task. Alex and Fritz were originally planned to become operational in Q3/2021. However, due to the worldwide shortage not only of IT equipment but also of many other supplies, only Alex could be brought into early-access mode by the end of 2021.

The number of active accounts and groups remained at a similar level to previous years. NHR@FAU also welcomed its first national users. Fortunately, the NHR@FAU staff could also be increased by several people.

Preliminary highlights of 2022

Alex and Fritz became fully operational, and both have already been extended by additional nodes. On some days, the peak power consumption of all HPC systems exceeds 1 MW. On Fritz, we sometimes see more than 1 PFlop/s of sustained performance in regular production.

NHR is a major game changer: there are already 12 large-scale projects, 30 normal projects, and 13 test/porting projects from all over Germany.

Research projects associated with any of the HPC clusters except Alex and Fritz, extracted from FAU’s CRIS database (items occur multiple times if a field is mentioned for multiple clusters)


Research projects associated with the Tier3/NHR systems Alex and Fritz, extracted from FAU’s CRIS database (items occur multiple times if a field is mentioned for multiple clusters)


Publications associated with any of the HPC clusters except Alex and Fritz, extracted from FAU’s CRIS database (items occur multiple times if a publication is linked to multiple clusters)


Publications associated with the Tier3/NHR systems Alex and Fritz, extracted from FAU’s CRIS database (items occur multiple times if a publication is linked to multiple clusters)
